GULYÁS, Gábor György, Ph.D.

Behavioral fingerprinting in iOS 9 and tracking the Tor Browser users (part 2 of 2)

2016-06-13 | Gabor

In the previous post we discussed in detail why access to attributes has been limited in the Tor Browser and iOS 9. We also discussed a couple of attack schemes that, we argued, remain viable despite the limitation. Now we will see whether that is really the case.

[This is part of a series discussing results of our new paper.]

Algorithms and data sources

In the paper we showed that targeted and general fingerprinting attacks are NP-hard in general, and that the best polynomial-time approximations are greedy heuristics. Therefore, we propose two greedy algorithms, for which you can find the implementations here (and their pseudocode in the paper). We evaluated these attacks on a smartphone application installation dataset of 54,893 users and on a browser attribute dataset of 43,656 users (restricted to screen sizes and font lists).

(We introduced a third attack type in the paper: general fingerprinting can be used to select the sensitive points for mass de-anonymization of location datasets. We omit discussion of this attack here; see the paper for more details.)
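To give a feel for what a greedy heuristic for targeted fingerprinting can look like, here is a minimal sketch. It is not the implementation from the paper or its repository: the function name `targeted_fingerprint`, the toy `USERS` data and the rule "pick the app that shrinks the set of indistinguishable users the most" are all assumptions made for illustration. A user is treated as matching the target when they agree with the target on the presence or absence of every queried app.

```python
def targeted_fingerprint(target, users, limit):
    """Greedy sketch (illustrative, not the paper's code).

    users: dict mapping a user id to the set of apps that user has.
    Returns (chosen apps, users still indistinguishable from the target).
    """
    target_apps = users[target]
    all_apps = sorted(set().union(*users.values()))
    candidates = set(users)  # everyone matches before any app is queried
    chosen = []
    while len(candidates) > 1 and len(chosen) < limit:
        best_app, best_remaining = None, candidates
        for app in all_apps:
            if app in chosen:
                continue
            has_app = app in target_apps
            # Keep only users who agree with the target on this app.
            remaining = {u for u in candidates if (app in users[u]) == has_app}
            if len(remaining) < len(best_remaining):
                best_app, best_remaining = app, remaining
        if best_app is None:  # no app separates the target any further
            break
        chosen.append(best_app)
        candidates = best_remaining
    return chosen, candidates


# Hypothetical toy data; real records would hold hashed app names.
USERS = {
    "alice": {"a", "b"},
    "bob": {"a", "c"},
    "carol": {"a", "b", "d"},
    "dave": {"a", "b"},
}

print(targeted_fingerprint("alice", USERS, limit=50))
```

In this toy data alice and dave have identical app lists, so no fingerprint can separate them; the `limit` parameter plays the role of the query limit discussed later in the post.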

Evaluation of the iOS 9 scheme

The smartphone application dataset

Our dataset contained the list of installed smartphone applications per user; identifiers were removed, and each application name was replaced with its SHA1 hash. It contained 54,893 records, one per user, each composed of the list of applications installed and run by that user (system applications were removed). See the figure below for further statistical details.
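As a rough illustration of the hashing step, the sketch below replaces each application name with the hex digest of its SHA1 hash using Python's standard `hashlib`. The exact input string that was hashed in the dataset (display name, bundle identifier, encoding) is not stated here, so the UTF-8 encoding and the example names are assumptions.

```python
import hashlib


def hash_app_names(app_names):
    """Return the set of SHA1 hex digests for the given app names.

    Assumes names are hashed as UTF-8 strings; the real preprocessing
    of the dataset may have differed.
    """
    return {hashlib.sha1(name.encode("utf-8")).hexdigest() for name in app_names}


# Hypothetical application names, for illustration only.
record = hash_app_names(["com.example.mail", "com.example.maps"])
print(sorted(record))
```

Equal names always map to equal hashes, so per-user app lists remain comparable across users even though the names themselves are no longer readable.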

Fingerprinting individuals

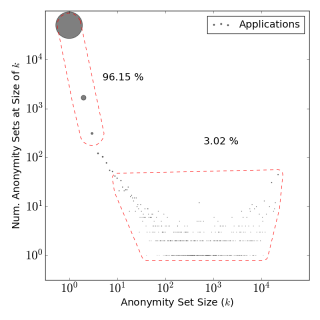

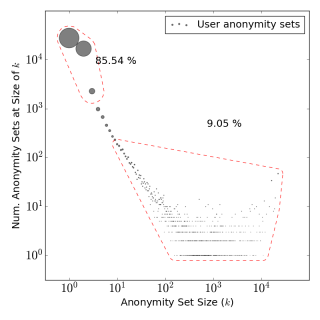

We calculated the targeted fingerprint for each user in our dataset; on average, 2.3 applications were enough to construct the fingerprint. While these fingerprints did not always point to a single user, in most cases (96.15%) a fingerprint characterized at most three users. (Note: a fingerprint is not necessarily unique; if, for example, there are two users who are distinguished from everyone else only by four applications, those four apps are going to be their fingerprint.) We also checked whether the protection scheme itself might be viable, with the problem lying only in the chosen constraint (the limit number): even after decreasing the app detection limit to 2 applications, most users (85.54%) were still almost uniquely characterizable with fingerprints. This clearly shows that the fault is in the concept, not in the setting.

[Figures: query limit: 50 applications; query limit: 2 applications]
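The percentages above are shares of users whose fingerprint leaves them in a small anonymity set. A minimal sketch of that kind of measurement follows; the helper names, the toy data and the hand-written fingerprints are hypothetical, and matching is assumed to mean agreeing with the target on the presence or absence of each app in the fingerprint.

```python
def anonymity_set(target, users, fingerprint):
    """Users who agree with the target on every app in the fingerprint."""
    target_apps = users[target]
    return {
        user
        for user, apps in users.items()
        if all((app in apps) == (app in target_apps) for app in fingerprint)
    }


def share_with_small_set(users, fingerprints, k=3):
    """Fraction of users whose fingerprint matches at most k users."""
    hits = sum(
        1
        for user, fp in fingerprints.items()
        if len(anonymity_set(user, users, fp)) <= k
    )
    return hits / len(fingerprints)


# Hypothetical toy data; alice and dave share the same app list.
APP_LISTS = {
    "alice": {"a", "b"},
    "bob": {"a", "c"},
    "carol": {"a", "b", "d"},
    "dave": {"a", "b"},
}
FINGERPRINTS = {
    "alice": ["b", "d"],
    "bob": ["b"],
    "carol": ["d"],
    "dave": ["b", "d"],
}

print(share_with_small_set(APP_LISTS, FINGERPRINTS, k=1))
print(share_with_small_set(APP_LISTS, FINGERPRINTS, k=3))
```

Here alice and dave end up with the same fingerprint and an anonymity set of two, which mirrors the note above that a fingerprint does not have to be unique.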

General fingerprinting

Creating a general fingerprint that serves as a behavioral identifier is a harder problem. While this attack could work even on iOS 9 (the apps used for identification just need to be declared in advance), for our dataset approx. 20 applications were needed to (almost) uniquely identify one third of our users. The animation below shows how the fingerprint length affects uniqueness.
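A general fingerprint is a single list of apps queried for every user. The sketch below greedily grows such a list; it is an illustration only, not the algorithm from the paper. As an assumed objective it picks, at each step, the app that splits the users into the most distinct presence/absence signatures, and it stops early when no app helps. All names and the toy data are hypothetical.

```python
from collections import Counter


def signature(apps, fingerprint):
    """Presence/absence pattern of the fingerprint apps for one user."""
    return tuple(app in apps for app in fingerprint)


def general_fingerprint(users, max_length):
    """Greedy sketch: maximize the number of distinct signatures."""
    all_apps = sorted(set().union(*users.values()))
    chosen = []
    groups = 1  # with an empty fingerprint all users look the same

    def score(app):
        return len({signature(apps, chosen + [app]) for apps in users.values()})

    while len(chosen) < max_length:
        remaining = [app for app in all_apps if app not in chosen]
        if not remaining:
            break
        best = max(remaining, key=score)
        best_score = score(best)
        if best_score <= groups:  # no remaining app separates anyone
            break
        chosen.append(best)
        groups = best_score
    return chosen


def unique_share(users, fingerprint):
    """Fraction of users whose signature is shared with nobody else."""
    counts = Counter(signature(apps, fingerprint) for apps in users.values())
    unique = sum(
        1 for apps in users.values() if counts[signature(apps, fingerprint)] == 1
    )
    return unique / len(users)


# Hypothetical toy data; u1 and u4 have identical app lists.
TOY_USERS = {
    "u1": {"a", "b"},
    "u2": {"a", "c"},
    "u3": {"a", "b", "d"},
    "u4": {"a", "b"},
}

fp = general_fingerprint(TOY_USERS, max_length=10)
print(fp, unique_share(TOY_USERS, fp))
```

Calling `unique_share` on prefixes of the fingerprint gives the kind of length-versus-uniqueness curve described above.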

Evaluation of anonymity in the Tor Browser

The browser property dataset

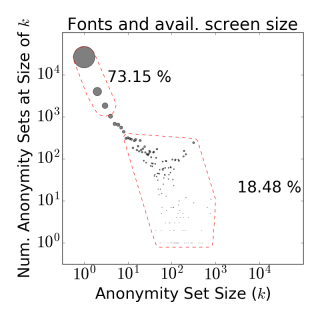

Our dataset contained the list of installed fonts, the screen size and available screen size per user. The data collection was done on fingerprint.pet-portal.eu where users could test and compare their OS fingerprint in different browsers. More than 107,102 profiles were collected between 2012-04-05 and 2015-09-22, of which we only used a subset of users and attributes after cleaning the data (e.g., removing possible duplicates). As a result, we had 43,656 records, which contained the list of detected fonts, and the screen and available screen sizes. Further statistical details are in the figure below.

Targeting individual browsers

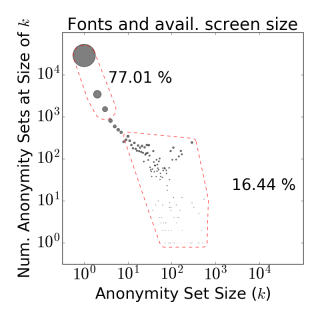

The targeted fingerprinting attack presumes that an attacker is looking for someone with a known identity whose browser fingerprint data was also previously collected (check out the attack setup here). While the font set alone would do relatively little harm (although this still leaves fewer than 40% of users almost unique), if we also include the available screen size, then the majority (77%) becomes almost uniquely identifiable with fingerprints. (This also gives some insight into why TBB shows a warning when you maximize the window.)

[Figure: fonts query limit: 10]

Here again, decreasing the font limit shows that the problem is with the concept, not the setting: even a limit of only 5 fonts per page would still leave almost a majority of users vulnerable to identification.
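The effect of adding the available screen size to the font set, discussed above, can be illustrated with a small grouping exercise. The sketch below counts how many records fall into groups of at most `k` identical values, first by font set alone and then by font set plus available screen size. The records, field names and the threshold `k` are hypothetical and only show the mechanism.

```python
from collections import Counter


def share_almost_unique(records, key, k=1):
    """Fraction of records whose key value is shared by at most k records."""
    counts = Counter(key(r) for r in records)
    return sum(1 for r in records if counts[key(r)] <= k) / len(records)


# Hypothetical browser profiles: detected fonts and available screen size.
RECORDS = [
    {"fonts": frozenset({"Arial", "Calibri"}), "avail": (1920, 1040)},
    {"fonts": frozenset({"Arial", "Calibri"}), "avail": (1366, 728)},
    {"fonts": frozenset({"Arial"}), "avail": (1920, 1040)},
    {"fonts": frozenset({"Arial", "Calibri"}), "avail": (1920, 1040)},
]

fonts_only = share_almost_unique(RECORDS, key=lambda r: r["fonts"])
fonts_and_screen = share_almost_unique(
    RECORDS, key=lambda r: (r["fonts"], r["avail"])
)
print(fonts_only, fonts_and_screen)
```

Adding an attribute can only split groups further, never merge them, which is why combining attributes tends to raise the share of identifiable users.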

Tracking Tor Browser users with general fingerprinting

It turned out that general fingerprinting was a real threat to Tor Browser users: having roughly one third (32.83%) of users identifiable means that TBB can only be an anonymous browser for 67% of its users, and only when the traffic of the site in question is large enough.

Smaller sets of TBB visitors per site are more easily identifiable. This could have been a threat to TBB: although its daily user base should be roughly two million users, that breaks down into small sets when we consider the number of TBB visitors per site.

Conclusion

I think the most interesting and important result here is that limiting access to attributes is not a good idea, as the concept itself is flawed. Limiting access clearly provides some privacy protection (for example, it limits the discoverability of user preferences in cases such as the curious Twitter application), but it does not protect against other types of attack. We hope that our work can change the state of the art and that future products will use other means of protection. Let's hope for the best.

If you are interested in checking out further resources, we also provide code for our paper in this repository.

Tags: anonymity, tracking, uniqueness, pet symposium, paper, fingerprinting
