GULYÁS, Gábor György, Ph.D.

Behavioral fingerprinting in iOS 9 and tracking the Tor Browser users (part 1 of 2)

2016-06-06 | Gabor

We have a new paper accepted at the PET Symposium entitled 'Near-Optimal Fingerprinting with Constraints' (with Gergely Ács and Claude Castelluccia). In this paper, we argue that it is inefficient to protect user privacy by limiting access to user attributes, as this leaves re-identification attacks and tracking possible. This protection method is in fact used in contemporary tech products: a limit on app detection queries was introduced on iPhones and iPads with iOS 9, and a similar limit was in use for a long period in the Tor Browser.

[This is part of a series discussing results of our new paper.]

What is attribute query limitation and why is it used?

Let's go in historical order and start with the Tor Browser. It all started in 2010, when the Panopticlick project demonstrated that the attributes of a web browser can be extracted in a way that makes the browser unique. This extract, which characterizes a web browser individually, is called a fingerprint. This had a huge impact: if browser fingerprinting is possible, then websites can identify returning visitors, and tracking companies that are present on multiple websites can identify surfers across the web. Furthermore, while deleting tracking cookies is a relatively easy task, changing the browser fingerprint is not trivial at all.

As it became clear that browser fingerprinting is a serious issue, and that detecting available fonts would be a key part of future web tracking methods, the Tor Browser (or Tor Browser Bundle, TBB) developer community decided, somewhere around 2012, to protect their users with a simple countermeasure: limiting the number of fonts that a webpage can load. By default, the limit was 10.

However, it turned out that their implementation could be circumvented, and more recently the developers could not port their solution to newer Firefox versions (which we also noticed in November 2015). Due to these issues, the developers decided to change the protection method to shipping a fixed set of fonts with the browser. As the original protection was abandoned due to implementation difficulties, and not for conceptual reasons, we decided to include the analysis of this method in our paper.

Interestingly, in another scenario Apple decided to protect iOS users' privacy in a similar manner: iOS 9 introduced a new limitation on how applications can detect other applications' presence on a device. (This was probably in response to the application list detection started by Twitter.) The application list not only reveals details of the phone owner's preferences but, as we discuss later, can also be fingerprinted in a similar manner to web browsers: the extracted application list then identifies the user of the phone.

As a result of the API changes in iOS 9, the following limits come into effect when a user upgrades: old applications (linked against iOS 8 and before) can detect the presence of at most 50 other applications, while new applications are forced to declare in advance which other applications they want to detect.
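The two rules can be illustrated with a small toy model. This is only a sketch of the limits as described above, not Apple's API: the class name `AppQueryPolicy` and its methods are hypothetical, and it assumes that the legacy limit counts distinct applications, so repeating an earlier query does not use up the budget.

```python
class AppQueryPolicy:
    """Toy model of the iOS 9 app detection limits (hypothetical, not Apple's API)."""

    def __init__(self, declared=None, legacy_limit=50):
        # declared=None models an old app (linked against iOS 8 or earlier);
        # otherwise it is the list a new app declares in advance.
        self.declared = None if declared is None else set(declared)
        self.legacy_limit = legacy_limit
        self.seen = set()  # distinct apps an old app has already queried

    def may_query(self, app):
        """Return True if the app is allowed to test for the presence of `app`."""
        if self.declared is not None:
            return app in self.declared
        if app in self.seen:
            return True  # assumption: repeated queries are not counted again
        if len(self.seen) < self.legacy_limit:
            self.seen.add(app)
            return True
        return False


legacy = AppQueryPolicy()
legacy_results = [legacy.may_query(f"app{i}") for i in range(60)]
modern = AppQueryPolicy(declared={"twitter"})
```

In this model the old app gets answers for the first 50 distinct applications only, while the new app can query exactly what it declared. Either way, an attacker has a budget of queries to spend, which is the constraint the attacks below work within.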

(See Section 5 in the paper if you are interested in more details. There we discuss these issues in more depth, with more references and links.)

Tracking Tor Browser users

In the case of the Tor Browser, the goal of the protection is to prevent de-anonymization and linking attacks. These attacks are also covered by the attacker model in the TBB design description.

We speak of de-anonymization when someone's public identity (e.g., a Facebook profile observed outside TBB) can be linked to an anonymous session that is observed while that person is using TBB. In practice, the targeted person visits Facebook (or another similar site), where the list of fonts available on his computer is extracted and linked to his identity (steps 2 and 3). Then, when he is assumed to be contacting a (sensitive) website through the Tor Browser, an attacker can try to reveal his identity by querying at most 10 fonts (step 4). We call this targeted fingerprinting in the paper.
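To make the idea concrete, here is a minimal sketch of how an attacker might pick the fonts to query for one known target. It uses a plain greedy set-cover heuristic, which is an assumption for illustration and not necessarily the method analyzed in the paper; the function name and the toy font lists are hypothetical.

```python
def targeted_fingerprint(target_fonts, others, candidates, limit=10):
    """Pick up to `limit` fonts whose presence/absence on the target
    differs from as many other users as possible (greedy set cover).

    Returns the chosen fonts and the users still indistinguishable
    from the target after those queries.
    """
    undistinguished = set(others)  # users that still look like the target
    chosen = []
    pool = sorted(candidates)      # sorted for deterministic tie-breaking

    while undistinguished and pool and len(chosen) < limit:
        def eliminated(font):
            expected = font in target_fonts
            return {u for u in undistinguished
                    if (font in others[u]) != expected}

        best = max(pool, key=lambda f: len(eliminated(f)))
        gone = eliminated(best)
        if not gone:
            break  # no remaining font separates the target any further
        chosen.append(best)
        pool.remove(best)
        undistinguished -= gone
    return chosen, undistinguished


# Toy data: the target's font list was observed outside the Tor Browser.
target = {"Arial", "Futura"}
others = {
    "a": {"Arial"},
    "b": {"Arial", "Futura", "Calibri"},
    "c": {"Futura"},
}
chosen, left = targeted_fingerprint(target, others, {"Arial", "Calibri", "Futura"})
```

On this toy data three queries are enough to separate the target from everyone else, well within a budget of 10.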

In the case of linking attacks, the user does not need to be observed outside the Tor Browser. A malicious party may also want to create a fingerprint of at most 10 fonts (and some of the other available attributes) to track visitors who are using the Tor Browser. This could be used to identify returning Tor Browser visitors and to track their activities. We analyzed to what extent this is possible.

Let us think of a malicious website that has access to a large fingerprint database: with this, the website can select the fonts that distinguish users the most. In other words, the attacker would select a list of fonts that splits the users into the smallest, equally sized sets of similar users (equivalence classes). Thus, if he queries the presence of these fonts on a Tor Browser user's machine, the resulting binary vector will be unique with high probability (on a medium-sized set of Tor Browser users). We call this approach general fingerprinting in the paper.
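The selection step can be sketched with a simple greedy heuristic: repeatedly add the font that leaves the fewest colliding users, measured here as the sum of squared equivalence class sizes. This is an illustrative assumption, not necessarily the algorithm evaluated in the paper, and the function names and toy database are hypothetical.

```python
from collections import Counter


def partition_sizes(users, fonts):
    """Count how many users share each binary vector over the chosen fonts."""
    return Counter(tuple(f in installed for f in fonts)
                   for installed in users.values())


def collision_cost(users, fonts):
    """Sum of squared class sizes: smaller means smaller, more even classes."""
    return sum(size * size for size in partition_sizes(users, fonts).values())


def greedy_select(users, candidates, limit=10):
    """Greedily pick up to `limit` fonts that split the users the most."""
    chosen = []
    remaining = sorted(candidates)  # sorted for deterministic tie-breaking
    for _ in range(limit):
        if not remaining:
            break
        best = min(remaining, key=lambda f: collision_cost(users, chosen + [f]))
        if collision_cost(users, chosen + [best]) >= collision_cost(users, chosen):
            break  # no remaining font splits any class further
        chosen.append(best)
        remaining.remove(best)
    return chosen


# Toy fingerprint database: user -> set of installed fonts.
users = {
    "u1": {"Arial", "Calibri"},
    "u2": {"Arial", "Futura"},
    "u3": {"Arial", "Calibri", "Futura"},
    "u4": {"Arial"},
}
selected = greedy_select(users, {"Arial", "Calibri", "Futura"})
largest_class = max(partition_sizes(users, selected).values())
```

In this toy database every user has Arial, so querying it tells the attacker nothing, while Calibri and Futura together give each of the four users a distinct binary vector.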

Behavioral fingerprinting on iOS 9

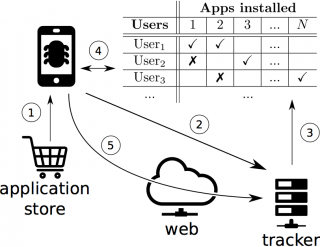

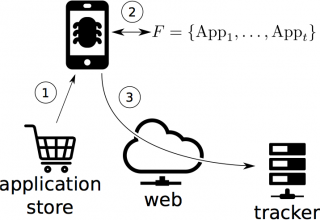

These attack work similarly on iOS 9 as well. In case of targeted fingerprinting, an attacker obtains information on which applications a selected user has installed, and uses this to generate a uniquely identifying personal fingerprint for that particular user. In case general fingerprinting, the attacker need to obtain a dataset on app usage statistics, from which he can select some applications for the fingerprint. The figures below explain how these attack are executed.

|

Targeted fingerprinting attack scheme |

General fingerprinting attack scheme |

What makes general fingerprinting even more interesting is that such fingerprints can serve as behavioral identifiers for web tracking. With this demo, you can test on your iPhone or iPad that, even if privacy settings are enforced, app-generated identifiers can be leaked to the web by simply opening a URL. In the demo, the ID can be an arbitrary base64-encoded string. The figure below shows the details of how the leak is actually done.
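As a rough illustration of the URL-based leak, the sketch below packs an identifier into a base64 string, appends it to a URL, and recovers it on the receiving side. The endpoint `https://tracker.example/collect`, the `id` parameter name, and both function names are hypothetical; the actual mechanics of the demo are not described in this post.

```python
import base64
from urllib.parse import urlencode, urlparse, parse_qs


def build_leak_url(identifier, endpoint="https://tracker.example/collect"):
    """Encode a bytes identifier as URL-safe base64 and attach it to a URL."""
    token = base64.urlsafe_b64encode(identifier).decode("ascii")
    return f"{endpoint}?{urlencode({'id': token})}"


def read_leaked_id(url):
    """Server side: recover the original bytes from the opened URL."""
    token = parse_qs(urlparse(url).query)["id"][0]
    return base64.urlsafe_b64decode(token)


# Example: a fingerprint bit vector packed into two bytes.
fingerprint = bytes([0b10110010, 0b00000001])
leak_url = build_leak_url(fingerprint)
recovered = read_leaked_id(leak_url)
```

Once the app asks the system to open such a URL, the web server sees the identifier as part of an ordinary request, so it can be tied to whatever the website already tracks.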

In the next blog post I will present our main findings on these attacks.

If you are interested in checking out further resources, we also provide code for our paper in this repository.

Some icons we used in the illustrations were made by Freepik (from www.flaticon.com).

Tags: anonymity, tracking, uniqueness, pet symposium, paper, fingerprinting
